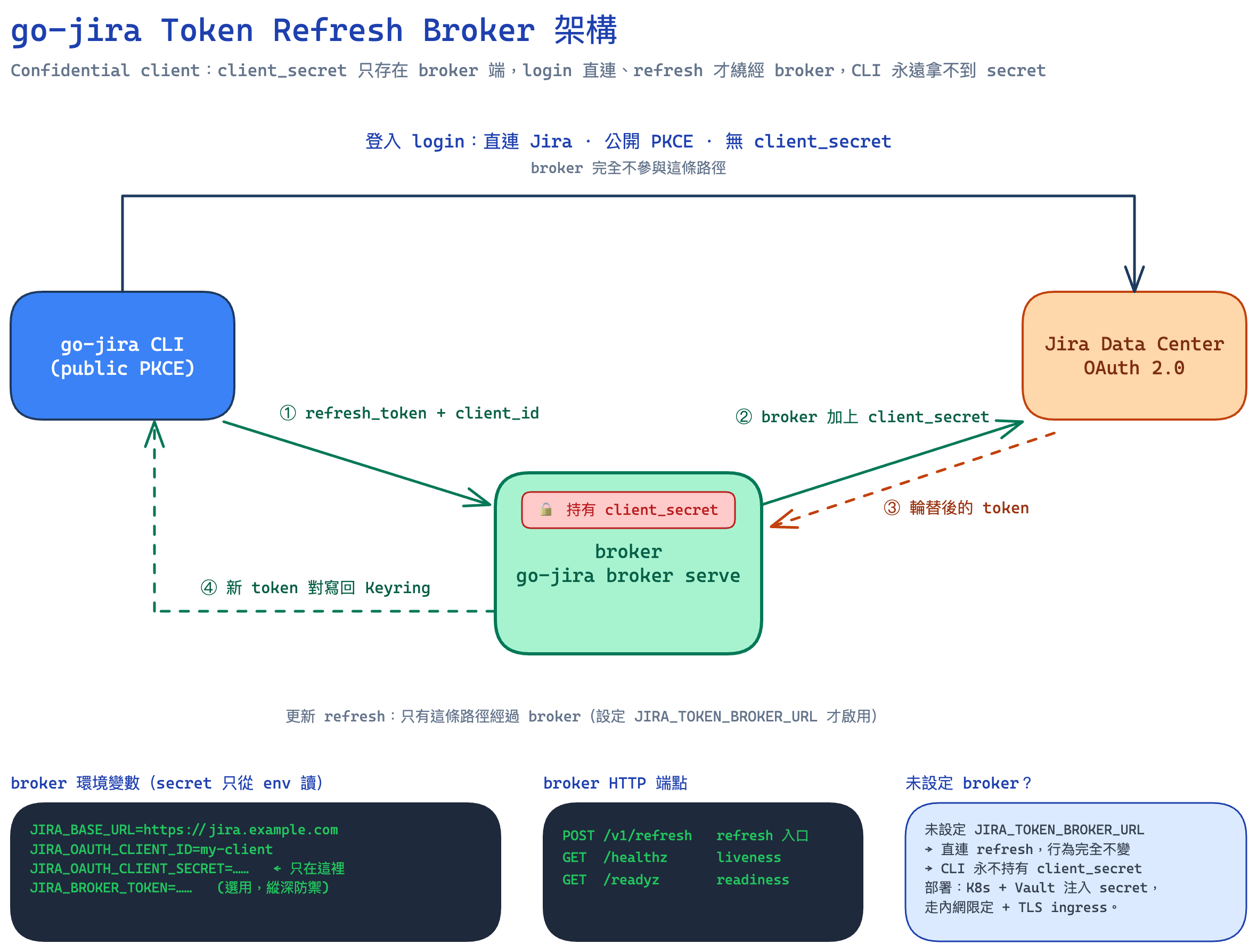

More and more developers let AI CLI tools like Claude Code run commands, look things up, and tidy up afterwards right on their own development machines. Wiring that AI workflow into Jira makes it even more powerful: the AI can look up issues, update statuses, leave comments, and map commit messages back to tickets. The star of this post, go-jira (https://github.com/appleboy/go-jira), is a Jira CLI built for exactly this scenario. But there’s a security question that keeps getting underrated — how does the CLI authenticate to Jira?

The most common answer historically is a PAT (Personal Access Token). It’s simple, but on an AI development machine it carries two very real risks:

- The AI can read it by accident. A PAT usually lives in

.env, a shell rc file, or some config file. The moment you let an AI agent “freely explore the filesystem” on that machine, this long-lived token — which carries your full account permissions — can get pulled into the context, or even written out into some piece of output. - A file that lives forever is an exposure surface that lives forever. A PAT doesn’t rotate. Once leaked, it stays valid until you manually revoke it. We’ve all heard the stories: synced to the cloud, swept into a backup, accidentally committed into a repo.

That’s why “switching CLI auth from a PAT to Jira OAuth” has been pulled back into the spotlight lately. This post documents how the new go-jira uses OAuth Login + refresh tokens to tuck tokens into the operating system’s Keyring, so developers can obtain a token conveniently and store it safely — and so AI CLIs like Claude Code can interact with Jira in a much safer way.

[Read More]Note: go-jira’s OAuth only supports Jira Data Center, not Jira Cloud (the two use different OAuth flows).